The dangers of a solution in search of a problem

In the 1969 Neiman Marcus Christmas catalog there was a computer. It cost $10,600 — about $88,000 in today's dollars, more than the average American family income that year. It weighed over 100 pounds. It had no keyboard. The buyer would learn to operate it by attending the two-week programming course included in the price. She would then enter and retrieve recipes by flipping 16 toggle switches on the front of the device and watching the answers come back as patterns of blinking lights. Does 0011101000111001 mean broccoli, or carrots?

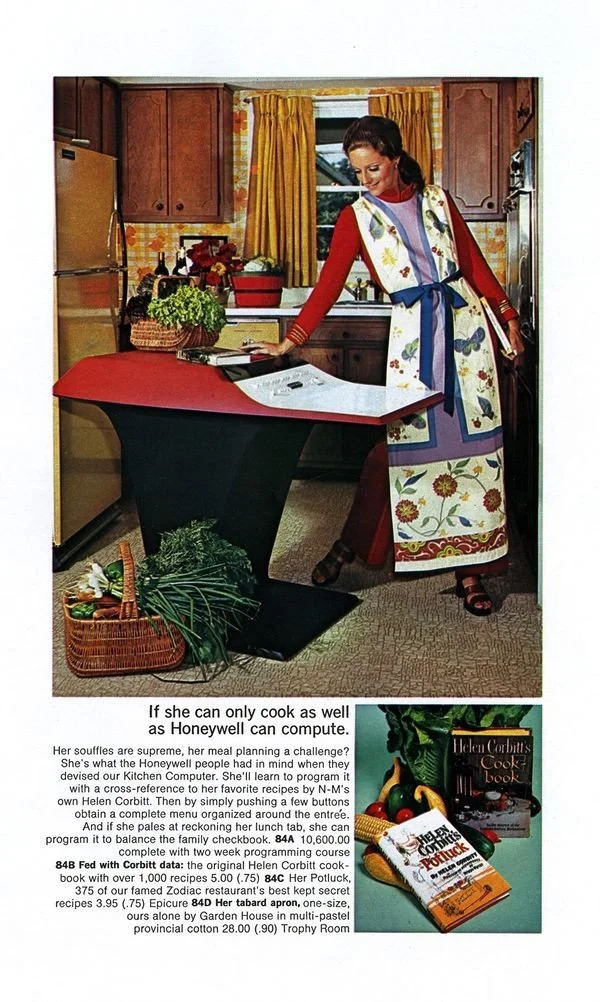

The advertising copy began: "If she can only cook as well as Honeywell can compute. Her soufflés are supreme, her meal planning a challenge?"

It was called the Honeywell Kitchen Computer. The pedestal, where the housewife was supposed to enter her recipes in binary and balance the family checkbook, had a built-in cutting board.

Neiman Marcus sold zero of them.

In my last post I told you about Dan Bricklin, who saw an error-prone, tedious task and built a tool to make it better. The Kitchen Computer is the inverse case: it is what happens when you have a tool and go looking for a job.

The actual machine inside the floral pedestal was the Honeywell H316 — a real 16-bit minicomputer with real customers (factories, labs, universities, even a nuclear power station). Its sibling, the H516, became one of the first network nodes of the ARPANET, the predecessor to the internet. Honeywell's industrial designer, Don Kelemen, was asked to add "a 'wow' factor" to the H316 line, and the floor pedestal version was the result. Neiman Marcus saw the pedestal, slapped a cutting board on it, and put it on the cover of their Christmas catalog.

The peer-reviewed design history paper on this, Paul Atkinson's "The Curious Case of the Kitchen Computer" in the Journal of Design History (2010), argues that the Kitchen Computer was never seriously meant to sell. It was meant to associate Honeywell with the future. Only around 20 H316 pedestals were ever built. At least one ended up at a college. The Computer History Museum has one. None, as far as anyone can tell, ended up in a kitchen.

The Kitchen Computer can teach mission-driven workers three lessons.

1. An organization can decide it needs an AI before it has a problem

"Every team should be piloting at least one AI tool."

"The board wants to know what we're doing with generative AI."

"We are an AI-first organization now." (so go figure out what to use it for)

I have heard of companies that see themselves as “forward-looking” measuring the number of tokens each worker uses and axing those who don’t use “enough.”

Without a problem to solve, AI gets assigned to an obvious workflow, something like "summarize meeting notes" or "draft donor emails": generic, universally recognizable activities, the recipes-and-checkbook of 2026 office work. The tool doesn’t execute perfectly, workers are hesitant about AI in the first place, and it doesn’t save that much time, so dozens or hundreds of $20/mo/seat accounts go unused. Leaders see low token use and cash outflow and react by pushing harder for AI. Workers respond to top-down pressure to adopt a purposeless and (to some) scary technology about as well as you’d expect.

Start your AI implementations with a purpose, not FOMO.

2. A lot of AI products will turn out to have no market

Not every failed AI product is the founder's fault. Some are sincere attempts to build something useful that the buyers, in the end, do not want. The Humane AI Pin — a $699 magnetic chest-mounted assistant — was returned more often than it sold by summer 2024; HP bought what was left of the company for $116 million in February 2025.

The Rabbit R1, another standalone AI assistant device, launched the same year with similar fanfare and similar reviews.

There are dozens of AI-powered toys, AI-powered note-takers, and AI-powered shopping assistants you have not heard of because they shipped, sold a few thousand, and disappeared.

This is not a moral or intellectual failure. It is a predictable part of the emergence of a new, general-purpose technology. The personal computer industry has its own graveyard of devices built around the assumption that the customer would eventually want what the engineer wanted to build, all proverbially buried next to the Kitchen Computer.

For a nonprofit being pitched an AI product, remember that some of the vendors speaking to you are on the wrong side of that survival rate. Asking "who else uses this, for which specific job, and how long have they been using it?" filters out a useful share of the catalog before you spend any money.

3. Some splashy AI launches are not really meant to sell

This is Atkinson's reading of the Kitchen Computer: Honeywell's executives knew their pedestal-with-a-cutting-board would not be a real product. The point was the cover of the Neiman Marcus catalog, the press attention, the association of Honeywell with the future. By those measures, the Kitchen Computer worked. Honeywell got the splash. DEC engineer Gordon Bell started thinking seriously about home computing because of it, even after deciding the Honeywell itself would be useless for recipes.

Some past and future AI-driven product launches can fit in this category. I am not naming individual companies because I cannot read founders' minds. I am pointing out that a product can be evaluated by its creator on metrics that have nothing to do with whether you, the buyer, get value. Sometimes very popular products lose money on each sale, but gain the producer brand awareness, goodwill, and market share. When a product is winning at those other games, it does not need to win at being useful to you.

Final thoughts

The Kitchen Computer solved Honeywell's marketing problem, but it solved no kitchen problems. The kitchen already had a recipe box on the counter that worked fine.

If you are in a mission-driven organization being asked to evaluate an AI product, an internal initiative, or an external pitch, the most useful thing you can do for everyone involved is to find the job first. Define a problem. Decide what "better" would look like. Then go find the tool (or find the evidence that you do not need one).

—

I asked Claude to write up something on the Honeywell product that would be useful today, in case it was interesting. At first, it wrote an article comparing it to the AI pin, but it wasn’t a great match or argument. However, as I was reading it, I thought of the other two points, and requested: “I am not sure about the AI pin comparison. What about using it to say that there will be 1) occasions in organizations where people want to implement AI but don't have a problem to solve. 2) a wide array of products using AI that turn out to have no market 3) perhaps, consumer AI companies creating products without enough demand, but knowing they will make a splash and get people talking.” I have heard of companies that see themselves as “forward-looking” using “not enough tokens used” as a metric to decide who gets laid off. To avoid the Honeywell Kitchen Computer problem, your AI implementation should start with a purpose.

Claude’s output in this case required a lot of editing, especially under the first point and especially in the order it presented ideas.